Last week, I wrote about building an automated AI marketing system that handles social strategy, email drafts, and SEO rewrites for my wife's eCommerce business. The response was great, but the most common question I received from other engineers wasn't about the prompts — it was about the architecture.

Specifically: How do you build a high-volume automation system that doesn't go bankrupt on API costs?

The trap most developers fall into right now is tight coupling. They build an AI tool by hardcoding calls directly to OpenAI or Anthropic. They lock their application's entire logic to one specific model's quirks. If that provider raises prices, deprecates an endpoint, or a competitor releases a significantly cheaper model, the application breaks or becomes a financial liability.

The solution is an abstraction layer. You must treat the LLM as an interchangeable compute unit, not the core product. The actual value of an AI system lies in the workflow and the interface.

Today, we are opening up the hood on OpenClaw — the architecture I built to solve this — to look at exactly how to decouple your interface from your LLM, and the specific routing logic used to slash API costs in production.

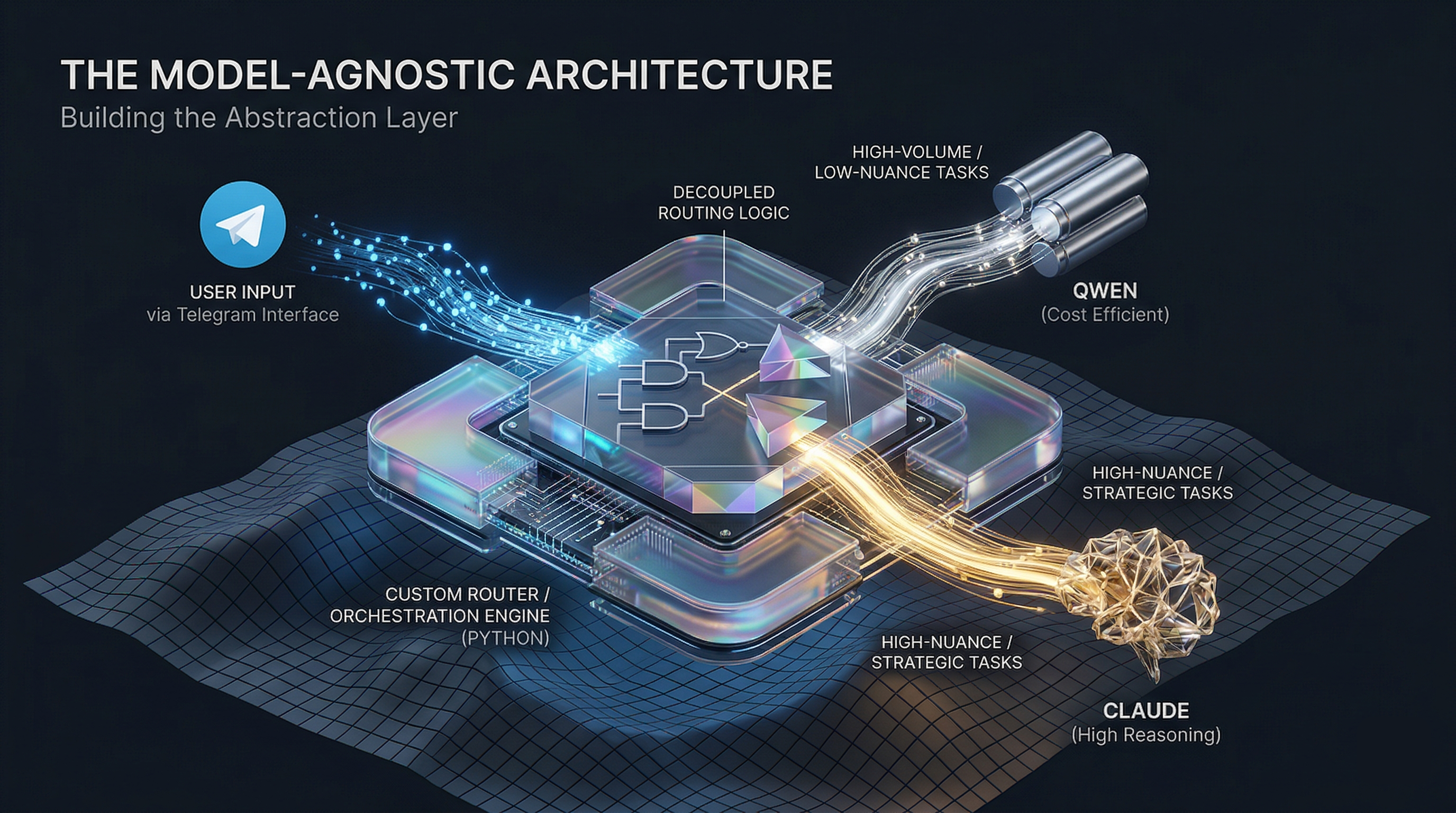

To build a truly model-agnostic system over a single weekend, I broke the architecture down into three distinct layers: The Interface, The Orchestration, and The Router.

Layer 1: The Interface (Telegram API)

The goal was zero user friction. When you are managing inventory and chasing a toddler, you do not have the cognitive bandwidth to log into a new SaaS dashboard or learn prompt engineering parameters.

The interface layer is completely decoupled from the AI. I used the Telegram Bot API.

- The UX: Chloe simply opens an app she already uses and sends a message (e.g., "Draft an email for the new summer line"). It feels exactly like texting a marketing intern.

- The Tech: Telegram hits a webhook on my server with the payload. The bot has absolutely zero awareness of what AI model will process the text. It only knows how to send strings and receive strings.

Layer 2: The Orchestration Engine (Python)

Behind the webhook sits a lightweight Python orchestration layer.

Instead of writing this boilerplate from scratch, I used Claude Code as my primary development environment. By feeding Claude Code the specific constraints of the Telegram API and my desired folder structure, I treated it as a pair programmer with deep project context. It mapped the webhook listeners and data validation in hours.

This engine does three things:

- Parses the incoming Telegram webhook.

- Extracts the intent (Content Strategy, SEO Rewrite, or Email Draft).

- Fetches the necessary system context (brand voice guidelines, current inventory) and packages the final prompt array.

Layer 3: The Cost-Routing Logic (The Secret Sauce)

This is where the model-agnostic design pays off. Token costs compound fast, and the golden rule of production AI is to route tasks to the cheapest model that meets the quality bar.

Instead of sending the packaged prompt straight to an API, the Orchestration Engine sends it to a custom Router class. The Router evaluates the intent and dynamically swaps the underlying model:

- High-Volume / Low-Nuance (

route_to_qwen): Tasks like taking 50 existing product listings and rewriting them for SEO discoverability require strict adherence to a JSON schema, but very little creative reasoning. The router points these at Qwen for a fraction of the cost. - High-Nuance / Strategic (

route_to_claude): Generating a weekly social media content strategy or drafting email campaign copy requires deep reasoning and strict adherence to a specific brand voice. The router sends these to Claude 3.5 Sonnet, absorbing the higher token cost because the strategic quality is non-negotiable.

The key insight: The model you choose is maybe 20% of the problem. The other 80% — the routing logic, the interface, the pipeline, the abstraction layer — is what separates the systems that ship from the systems that just demo well.

The Broader Lesson: Bridging the AI Gap

I have spent my career building AI systems at enterprise scale at AWS, WooliesX, and Linkby. When you are processing petabytes of data, you are obsessed with infrastructure.

But this weekend project was a stark reminder that the rule of scale applies to small businesses, too: Treat your infrastructure as seriously as your algorithm.

If you build the bridge correctly, you can deliver enterprise-grade output quality for the cost of pennies in API calls.

Are you currently hardcoding your AI pipelines to a single model provider, or have you built an abstraction layer? How are you handling model routing and API costs in production?

Drop a comment on LinkedIn — I read every single one, and I'd love to hear how you are solving the cost-routing problem.